CTRL + ALT + TEACH: Faculty Relationships with AI

Brad Garner, PhD, Digital Learning Scholar in Residence, Office of Academic Innovation, Indiana Wesleyan University

“The decisions made by faculty, instructional designers, and students today will significantly influence the trajectory of Artificial Intelligence development. Therefore, higher education must understand the technology and take a leadership role in shaping how it will be developed and integrated into our lives” (Cangialosi, 2024).

Artificial intelligence (AI) has rapidly become one of the most talked-about—and polarizing—topics on college campuses. Heralded by some as a revolutionary tool and by others as a threat to academic integrity and human connection, AI is reshaping conversations about the future of higher education. Faculty and administrators alike are seeking thoughtful ways to navigate this evolving terrain, balancing innovation with integrity.

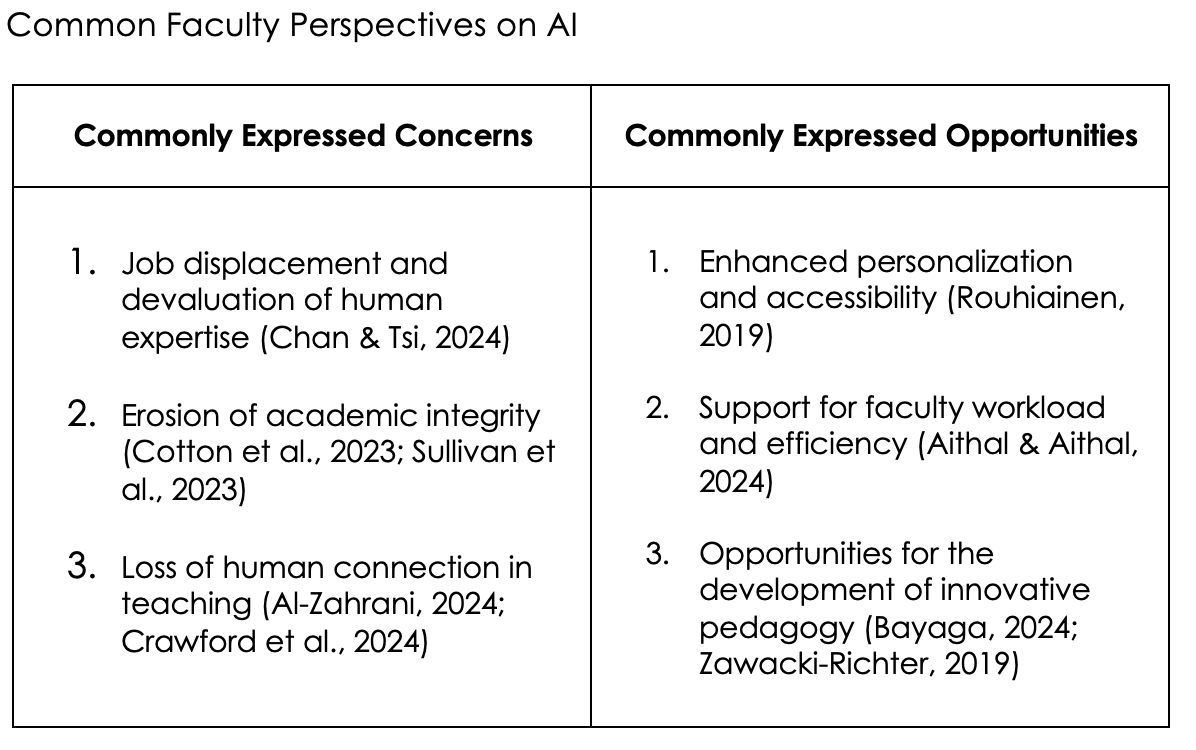

Table 1

As AI evolves, educators must engage in open, reflective dialogue about its implications. Discussing hopes and concerns with trusted colleagues can lead to more informed and balanced approaches to integrating AI into academic practice. Educator attitudes toward AI often fall into five broad mindsets:

Critical – Skeptical of AI’s benefits and concerned about ethical or pedagogical implications.

Confused – Struggling to understand AI’s functions, limitations, and potential impact.

Curious – Eager to explore AI’s possibilities and committed to learning more.

Collaborative – Willing to work with others (and with AI tools) to redesign and enhance teaching and learning.

Creative – Inspired by AI’s potential for educational innovation and experimentation.

By reflecting on these varied mindsets, educators can better articulate their stance and begin mapping a personal and professional path forward in the age of AI.

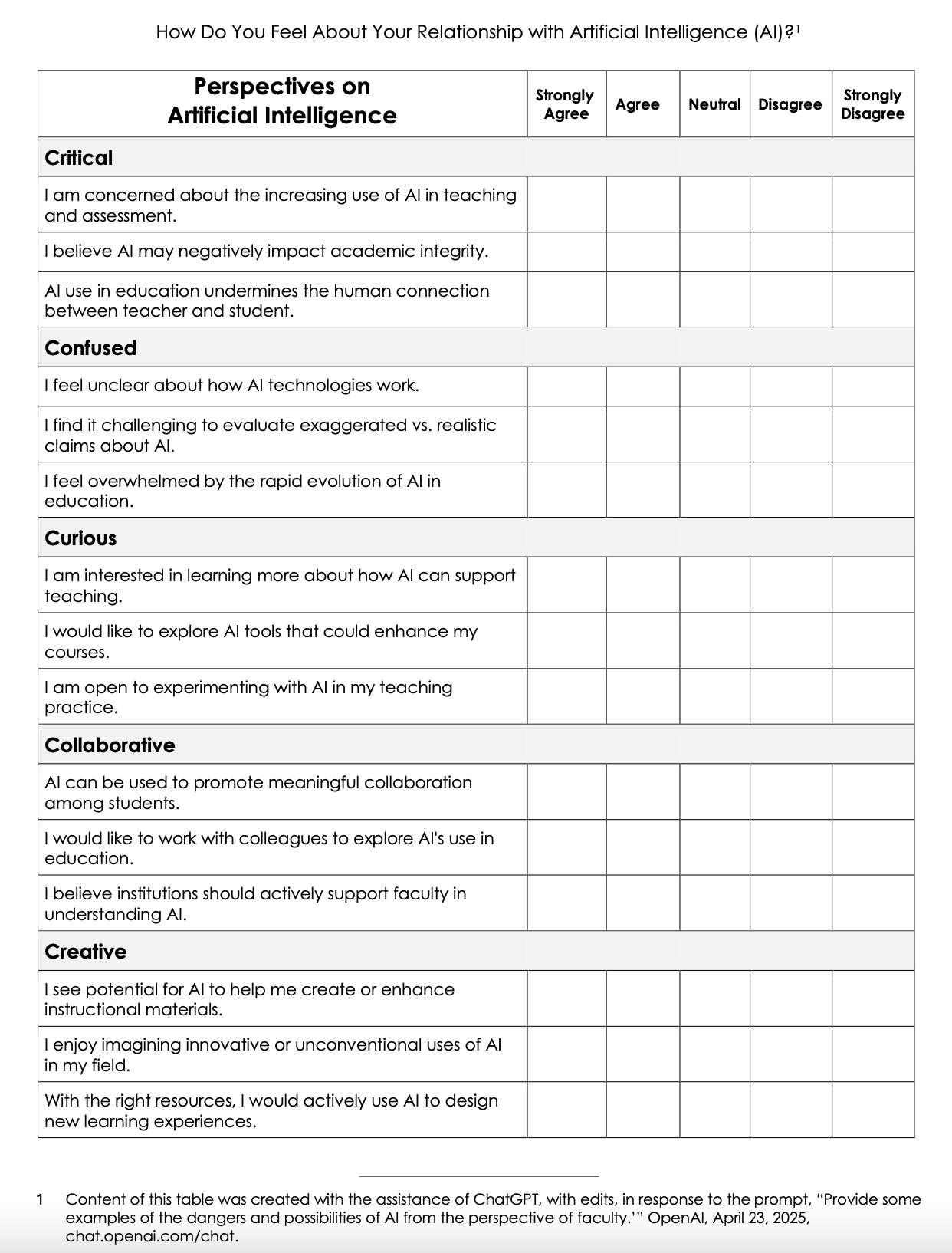

Table 2

Beyond our initial mindsets about AI—whether skeptical or enthusiastic—lies the deeper challenge of implementation: How do we design learning environments that thoughtfully respond to the presence and potential of AI?

Perkins et al. (2024) propose a tiered framework, the AI Assessment Scale (AIAS), for understanding and applying AI in educational settings. Its levels form a continuum of engagement strategies that faculty can adopt or adapt to fit their specific course goals:

No AI – Students are explicitly instructed not to use AI tools for a given assignment or assessment. This level often applies to tasks where original thought, process integrity, or skill development is the primary objective.

Example: Students are asked to write a reflective essay analyzing their personal experiences related to cultural identity.

AI Planning – AI may be used during the early stages of the creative process (brainstorming, outlining, or organizing), but students must disclose how they used AI and explain its influence on their final product.

Example: Students preparing a research proposal on climate change may use AI tools to brainstorm topic ideas, outline the structure, and organize their thoughts. They must explicitly disclose the AI tools used and clearly describe how AI informed their approach.

AI Collaboration – Students engage AI tools as co-creators, using them to draft, refine, and even evaluate their work. This collaborative model emphasizes transparency, critical thinking, and revision.

Example: Students writing a business plan use AI tools to generate initial drafts of market analyses, marketing strategies, and financial forecasts. They critically review and revise the AI-generated text, documenting each step and clearly explaining their modifications and decisions in their submissions.

Full AI – Students make full use of AI’s generative capabilities, relying on prompt engineering and iterative feedback to achieve their learning goals. Critical engagement is expected and assessed.

Example: Students in a digital storytelling course craft narratives entirely with generative AI, creating detailed prompts and refining the outputs iteratively. They document their prompting strategies, evaluate various AI-generated drafts, and submit both the final story and a reflection detailing their critical engagement and learning outcomes.

AI Exploration – The most open-ended level, where students are encouraged to experiment creatively with AI to generate novel insights, approaches, or solutions beyond conventional thinking.

Example: In an interdisciplinary design course, students are encouraged to explore generative AI tools creatively to conceptualize innovative solutions to urban sustainability challenges. Students might experiment freely with diverse AI applications—from architectural visualization to predictive analytics—and present a detailed reflection on their exploratory process, novel findings, and how these insights have pushed their thinking beyond conventional limits.

These tiers are not rigid rules but adaptable models that allow pedagogical flexibility across disciplines and contexts.

Practical Steps for Faculty: Navigating AI in the Classroom

Regardless of one’s stance on AI, educators must recognize that the technology is already woven into students’ academic, personal, and professional lives. As such, faculty are uniquely positioned to serve as informed guides in helping students make ethical and practical use of these tools. Here are a few ways to begin:

Try out AI tools: Explore free or trial versions of AI platforms, such as ChatGPT, Claude AI, Google AI Studio, NotebookLM, and Synthesia. Use this exploration to evaluate potential applications in your teaching.

Talk with your students: Research shows students often use AI more frequently than faculty realize. Discussing their habits and perceptions can uncover gaps and guide ethical expectations.

Engage with campus committees: Stay involved with institutional discussions around AI policies, best practices, and academic integrity. Aligning your syllabus with campus-wide norms ensures consistency and clarity.

Reflect on AI’s role in your courses: Consider which curriculum elements might benefit from AI-enhanced learning—such as feedback loops, content generation, or language support—while maintaining academic rigor.

Avoid the “one-size-fits-all” trap: AI is not a magic solution; it’s a tool. As Abraham Maslow (1966) observed, “I suppose it is tempting, if the only tool you have is a hammer, to treat everything as if it were a nail” (p. 15). Not every problem requires a hammer, and not every assignment should be AI-enabled. Use your disciplinary knowledge and teaching experience to decide where and when AI adds value.

By treating AI as both a pedagogical opportunity and a subject of inquiry, faculty can model the thoughtful, critical engagement that higher education seeks to instill in students.

References

Aithal, P. S., & Aithal, S. (2024). Optimizing the use of artificial intelligence-powered GPTs as teaching and research assistants by professors in higher education institutions: A study on smart utilization. SSRN Electronic Journal. https://doi.org/10.2139/ssrn.4729191

Al-Zahrani, A. M. (2024). Unveiling the shadows: Beyond the hype of AI in education. Heliyon, 10(9). https://doi.org/10.1016/j.heliyon.2024.e30696

Bayaga, A. (2024). Leveraging AI-enhanced and emerging technologies for pedagogical innovations in higher education. Education and Information Technologies, 30(1), 1045–1072. https://doi.org/10.1007/s10639-024-13122-y

Cangialosi, K. (2024, July 15). Educators must take a leadership role in AI literacy. Every Learner Everywhere. https://www.everylearnereverywhere.org/blog/educators-must-take-a-leadership-role-in-ai-literacy/

Chan, C. K., & Tsi, L. H. Y. (2024). Will generative AI replace teachers in higher education? A study of teacher and student perceptions. Studies in Educational Evaluation, 83, 101395. https://doi.org/10.1016/j.stueduc.2024.101395

Cotton, D. R. E., Cotton, P. A., & Shipway, J. R. (2023). Chatting and cheating: Ensuring academic integrity in the era of ChatGPT. Innovations in Education and Teaching International, 60(3), 325–336. https://doi.org/10.1080/14703297.2023.2190148

Crawford, J., Allen, K.-A., Pani, B., & Cowling, M. (2024). When artificial intelligence substitutes humans in higher education: The cost of loneliness, student success, and retention. Studies in Higher Education, 49(5), 883–897. https://doi.org/10.1080/03075079.2024.2326956

Maslow, A. H. (1966). The psychology of science: A reconnaissance. Harper & Row.

Perkins, M., Furze, L., Roe, J., & MacVaugh, J. (2024). The artificial intelligence assessment scale (AIAS): A framework for ethical integration of generative AI in educational assessment. Journal of University Teaching and Learning Practice, 21(6). https://doi.org/10.53761/q3azde36

Rouhiainen, L. (2019). How AI and data could personalize higher education. Harvard Business Review. http://hbr.org/2019/10/how-ai-and-data-could-personalize-higher-education

Sullivan, M., Kelly, A., & McLaughlan, P. (2023). ChatGPT in higher education: Considerations for academic integrity and student learning. Journal of Applied Learning & Teaching, 6(1), 1–10. https://doi.org/10.37074/jalt.2023.6.1.17

Zawacki-Richter, O., Marín, V. I., Bond, M., & Gouverneur, F. (2019). Systematic review of research on artificial intelligence applications in higher education – where are the educators? International Journal of Educational Technology in Higher Education, 16(1). https://doi.org/10.1186/s41239-019-0171-0

How to cite this article:

Garner, B. (2025). CTRL + ALT + TEACH: Faculty relationships with AI. Insights for College Transitions, 21(1).
